Guest blog post by Dr Robert Mesibov

Proofreading the text of scientific papers isn’t hard, although it can be tedious. Are all the words spelled correctly? Is all the punctuation correct and in the right place? Is the writing clear and concise, with correct grammar? Are all the cited references listed in the References section, and vice-versa? Are the figure and table citations correct?

Proofreading of text is usually done first by the reviewers, and then finished by the editors and copy editors employed by scientific publishers. A similar kind of proofreading is also done with the small tables of data found in scientific papers, mainly by reviewers familiar with the management and analysis of the data concerned.

But what about proofreading the big volumes of data that are common in biodiversity informatics? Tables with tens or hundreds of thousands of rows and dozens of columns? Who does the proofreading?

Sadly, the answer is usually “No one”. Proofreading large amounts of data isn’t easy and requires special skills and digital tools. The people who compile biodiversity data often lack the skills, the software or the time to properly check what they’ve compiled.

The result is that a great deal of the data made available through biodiversity projects like GBIF is — to be charitable — “messy”. Biodiversity data often needs a lot of patient cleaning by end-users before it’s ready for analysis. To assist end-users, GBIF and other aggregators attach “flags” to each record in the database where an automated check has found a problem. These checks find the most obvious problems amongst the many possible data compilation errors. End-users often have much more work to do after the flags have been dealt with.

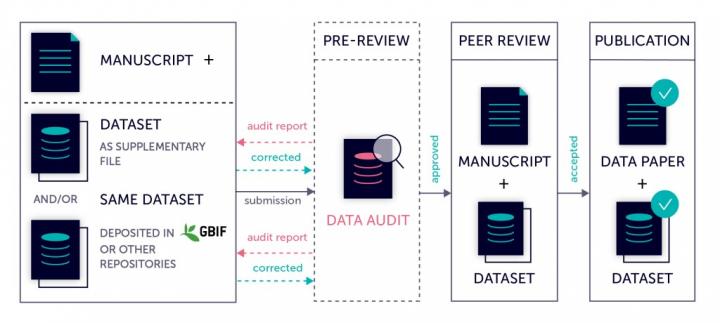

In 2017, Pensoft employed a data specialist to proofread the online datasets that are referenced in manuscripts submitted to Pensoft’s journals as data papers. The results of the data-checking are sent to the data paper’s authors, who then edit the datasets. This process has substantially improved many datasets (including those already made available through GBIF) and made them more suitable for digital re-use. At blog publication time, more than 200 datasets have been checked in this way.

Note that a Pensoft data audit does not check the accuracy of the data, for example, whether the authority for a species name is correct, or whether the latitude/longitude for a collecting locality agrees with the verbal description of that locality. For a more or less complete list of what does get checked, see the Data checklist at the bottom of this blog post. These checks are aimed at ensuring that datasets are correctly organised, consistently formatted and easy to move from one digital application to another. The next reader of a digital dataset is likely to be a computer program, not a human. It is essential that the data are structured and formatted so that they can be processed easily by that program and by other programs in the pipeline between the data compiler and the next human user of the data.

Pensoft’s data-checking workflow was previously offered only to authors of data paper manuscripts. It is now available to data compilers generally, with three levels of service:

- Basic: the compiler gets a detailed report on what needs fixing

- Standard: minor problems are fixed in the dataset and reported

- Premium: all detected problems are fixed in collaboration with the data compiler and a report is provided

Because datasets vary so much in size and content, it is not possible to set a price in advance for basic, standard and premium data-checking. To get a quote for a dataset, send an email with a small sample of the data to publishing@pensoft.net.

***

Data checklist

Minor problems:

- dataset not UTF-8 encoded

- blank or broken records

- characters other than letters, numbers, punctuation and plain whitespace

- more than one version of the same character (the simplest or most correct one is preferred)

- unnecessary whitespace

- Windows carriage returns (retained if required)

- encoding errors (e.g. “Dum?ril” instead of “Duméril”)

- missing data with a variety of representations (blank, “-”, “NA”, “?” etc.)
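
To give a feel for what some of these minor checks involve, here is a minimal Python sketch. It is an illustration only and not the method used in a Pensoft data audit (the author's own methods are described in the resources listed at the end of this post). The function name `audit_minor`, the `MISSING_TOKENS` set and the default tab separator are all assumptions made for the example, and the "odd character" test is a rough one based on Unicode categories.

```python
import re
import unicodedata
from collections import Counter

# Assumed placeholder strings for missing data; adjust for the dataset at hand.
MISSING_TOKENS = {"", "-", "NA", "N/A", "?", "null"}


def audit_minor(raw: bytes, sep: str = "\t") -> dict:
    """Report a few 'minor problem' indicators for a delimited text table."""
    report = {}
    try:
        text = raw.decode("utf-8")
        report["utf8"] = True
    except UnicodeDecodeError:
        # Fall back to Latin-1 so that the remaining checks can still run.
        text = raw.decode("latin-1")
        report["utf8"] = False

    report["carriage_returns"] = text.count("\r")
    # A "?" between two letters, or U+FFFD, often marks a failed conversion.
    report["suspect_encoding"] = (
        len(re.findall(r"[^\W\d_]\?[^\W\d_]", text)) + text.count("\ufffd")
    )

    lines = text.replace("\r\n", "\n").replace("\r", "\n").split("\n")
    if lines and lines[-1] == "":
        lines.pop()  # ignore the empty string after a final newline

    blank = 0
    untidy = 0
    odd = Counter()
    missing = Counter()
    for line in lines:
        if line.strip() == "":
            blank += 1  # empty, or whitespace only
            continue
        for ch in line:
            # Flag control, format and non-plain space characters.
            if ch not in (" ", sep) and unicodedata.category(ch)[0] in "CZ":
                odd[f"U+{ord(ch):04X}"] += 1
        for field in line.split(sep):
            if field != field.strip() or "  " in field:
                untidy += 1  # leading, trailing or doubled spaces
            if field.strip() in MISSING_TOKENS:
                missing[field.strip()] += 1

    report["blank_records"] = blank
    report["odd_characters"] = dict(odd)
    report["untidy_whitespace_fields"] = untidy
    report["missing_representations"] = dict(missing)
    return report
```

Calling `audit_minor(open("occurrences.txt", "rb").read())` on a hypothetical tab-separated file returns a dictionary of counts. The sketch only reports; deciding what to fix, and how, is left to the data compiler.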

Major problems:

- unintended shifts of data items between fields

- incorrect or inconsistent formatting of data items (e.g. dates)

- different representations of the same data item (pseudo-duplication)

- for Darwin Core datasets, incorrect use of Darwin Core fields

- data items that are invalid or inappropriate for a field

- data items that should be split between fields

- data items referring to unexplained entities (e.g. “habitat is type A”)

- truncated data items

- disagreements between fields within a record

- missing, but expected, data items

- incorrectly associated data items (e.g. two country codes for the same country)

- duplicate records, or partial duplicate records where not needed
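
A few of the major problems can also be hinted at with short Python helpers. Again, this is a sketch under stated assumptions rather than the audit's actual procedure: the function names are hypothetical, records are assumed to be already split into lists of strings, and the normalisation used to find pseudo-duplicates (ignoring case, spacing and full stops) is just one possible choice.

```python
import re
from collections import Counter, defaultdict


def field_count_tally(rows):
    """Tally fields per record; more than one distinct count suggests
    broken records or data items shifted between fields."""
    return Counter(len(row) for row in rows)


def pseudo_duplicates(values):
    """Group distinct values that become identical once case, spacing
    and full stops are ignored (an assumed normalisation)."""
    groups = defaultdict(set)
    for value in values:
        key = re.sub(r"[\s.]+", " ", value).strip().casefold()
        groups[key].add(value)
    return [sorted(group) for group in groups.values() if len(group) > 1]


def date_shapes(values):
    """Reduce each string to a shape (digits become 9, letters become A)
    and tally the shapes, exposing inconsistent date formats."""
    def shape(value):
        return re.sub(r"[^\W\d_]", "A", re.sub(r"\d", "9", value))
    return Counter(shape(value) for value in values)


def duplicate_records(rows):
    """Return each record that occurs more than once, exactly as written."""
    counts = Counter(tuple(row) for row in rows)
    return [list(row) for row, n in counts.items() if n > 1]
```

For example, `date_shapes` applied to a date column containing both "2017-03-05" and "5 Mar 2017" reports two shapes, "9999-99-99" and "9 AAA 9999", which is a quick sign that the column needs reformatting. Checks such as incorrect use of Darwin Core fields or disagreements between fields need knowledge of the data and are not covered here.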

For details of the methods used, see the author’s online resources:

- A Data Cleaner’s Cookbook

- BASHing data (a weekly data blog)

***

Find out more about Pensoft’s data audit workflow for data papers submitted to Pensoft journals on Pensoft’s blog.